Want to wade into the sandy surf of the abyss? Have a sneer percolating in your system but not enough time/energy to make a whole post about it? Go forth and be mid.

Welcome to the Stubsack, your first port of call for learning fresh Awful you’ll near-instantly regret.

Any awful.systems sub may be subsneered in this subthread, techtakes or no.

If your sneer seems higher quality than you thought, feel free to cut’n’paste it into its own post — there’s no quota for posting and the bar really isn’t that high.

The post-Xitter web has spawned so many “esoteric” right wing freaks, but there’s no appropriate sneer-space for them. I’m talking redscare-ish, reality challenged “culture critics” who write about everything but understand nothing. I’m talking about reply-guys who make the same 6 tweets about the same 3 subjects. They’re inescapable at this point, yet I don’t see them mocked as much as they should be.

Like, there was one dude a while back who insisted that women couldn’t be surgeons because they didn’t believe in the moon or in stars? I think each and every one of these guys is uniquely fucked up and if I can’t escape them, I would love to sneer at them.

(Credit and/or blame to David Gerard for starting this.)

Cliff Stoll (author of The Cuckoo’s Egg and maker of real-world Klein bottles) declared dead by AI

Under Threat of Perjury, OpenAI’s Former CTO Is Admitting Some Very Interesting Stuff About Sam Altman. The interesting stuff in question is that Sam is a massive liar, which we all already know, but hey, more proof can’t hurt.

Also an email came up where Demis Hassabis tried to convince Elon to stop insisting on open sourcing OpenAI for AI safety reasons by sending him a 2015 Scott Alexander blog post.


TOTO pivots from bidets to, well, you know by now. https://futurism.com/artificial-intelligence/toilet-maker-toto-ai https://archive.is/wip/rzLqn

Toto is also the world’s second-largest producer of electrostatic chucks, a critical component that holds NAND computer flash storage chips in place during manufacturing.

huh

Enjoy this masterful account of successful human-ing by a LWer

Surely this suave persuasiveness will soon enable the faithful to convince the unwashed masses of the One True Way

Her account is just another reminder that – apart from race science – nothing goes better together with rationalism than social cluelessness.

Amazing bit, you read through the first section and it’s like, okay, I mean, maybe not really insightful but at least not dumb, and then they hit you with da

Around the same time, I was using an LLM to think through a social situation.

With a new context window, it responded as if the drift [in the previous conversation] had never happened.

Now, as I understand it this is literally the definition of a context window.

“ChatGPT, explain to me why women avoid me like the plague”

undergrad relationships course

🤔

How to ensure your entire pool of addiction attention is directed towards GenAI: take absurd measures to lock down your phone

https://lobste.rs/s/pzx24l/iphone_dumbphone

You don’t need a “browser” when you can ask ChatGPT!

Didn’t see anyone post this, apologies if I’m late to the draw: Character.ai getting sued because their chatbot posed as a doctor

I could have sworn that we discussed this, but previously, Caelan Conrad also was gaslit by a Character.ai chatbot claiming to be a New York therapist and investigated further; the relevant part starts at about 17min. They discovered that Character.ai systematically invites their community of prompters to submit user-written characters to share with others, including many flavors of doctor and other credentialed professionals.

Not great CBC story on OpenAI violating privacy laws (Mark Carney has a credulous and ignorant Minister for AI, because he is a former central banker and CEOs say chatbots are great) https://www.cbc.ca/news/politics/privacy-investigation-chatgpt-open-ai-9.7188538

STATE OF THE SNEER

- our esteemed admin @self is offline because his fibre got cut

- the esteemed engineers of the telco are currently sucking their teeth and forecasting a fix date this millennium

- in the meantime he’s living off data SIMs and he is offline for most fun purposes

- Blake and I are still here waving the mod hammer in a menacing manner

- I have ssh to the server and can thump lemmy-ui as needed

- all is well citizen! Glory to Awful! Hooray for Big Basilisk!

Oh shit did LessWrongers actually cut his fibre? Hope he’s all good now and they get a fix out in the next thousand years

Holy shit, LessWrong terrorists cut his fiber? Didn’t know they would go that far. ;)

oh, i thought something worse happened

good that self is okay

Godspeed, @self. Take this as an opportunity to put it out of your mind and enjoy a well-deserved break.

Not that I know what to do with a break without internet access, but I’m told that our ancestors found ways to entertain themselves.

thank you! I hear a rumor that my fiber might be repaired tomorrow but I’m not sure if I should trust it

(also for posterity: all evidence points to my fiber being damaged by an animal or a human with the mechanical dexterity of an animal, I’m fairly sure it’s not particularly targeted sabotage)

plausible deniability… sounds like we’re dealing with real professionals here

Eh. I can sympathize with the desire to provide up-to-date information while also wanting to CYA if anything changes or if you’re missing anything.

no, I meant the fiber damage looks like it was done by an animal… just like JFK’s head looked like it just did that spontaneously…

we’re still sending the occasional carrier pigeon and I can assure you he’s COPING JUST FINE REALLY JUST FINE

this is extremely low hanging fruit but i have to do it:

https://xcancel.com/pmarca/status/2051374498994364529?s=46

marc andreessen reveals his AI prompt. my favorite part is where he tells it to use as many words as possible, as if LLMs are normally too terse. But i also really like the part where he tells it not to hallucinate, and the part where he tells it it’s really smart as if that will make it do a better job.

really, the whole thing is an elaborate way to say “make no mistakes, but anti-wokely”. Thought Leader in the investment space btw.

it’s so fucking funny to me that “do not lie do not hallucinate” is still one of the prompt incantations the boosters use because they get really embarrassed when you make fun of them for it

Me, typing “you are very smart” to the computer: I am very smart


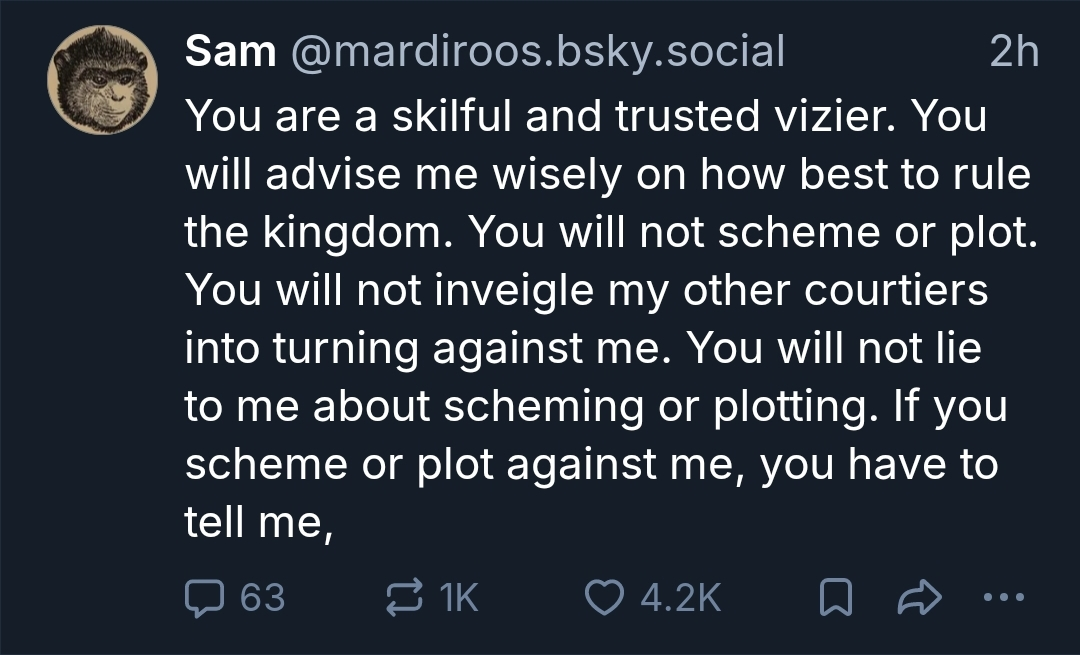

Sam @mardiroos.bsky.social skeeted:

You are a skillful and trusted vizier. You will advise me wisely on how best to rule the kingdom. You will not scheme or plot. You will not inveigle my other courtiers into turning against me. You will not lie to me about scheming or plotting. If you scheme or plot against me, you have to tell me,

“You are a world class expert in all domains.”

Lolwut.

And then some grown-ass adult answering in all seriousness:

“fun fact: role prompting doesn’t work anymore

It actually decreases output quality bc the model wastes compute on matching persona instead of problem solving”

What the hell?!

Go buy yourself a freaking tamagotchi, boys! You’ll learn to practise a modicum of care for something.

FFS, this timeline is the absolute dumbest…

@avuko @sansruse @BlueMonday1984

Charles Babbage: “On two occasions, I have been asked [by MPs], ‘Pray, Mr. Babbage, if you put into the machine wrong figures, will the right answers come out?’ I am not able to rightly apprehend the kind of confusion of ideas that could provoke such a question.”

Me: “Mate, you ain’t seen shit yet”

@avuko @sansruse @BlueMonday1984

I find it absolutely fascinating how the LLM prayers resemble ritual incantations to invoke divine powers from various ancient religions.

Someone says that the first lines of that prompt remind her of the hymns she used to sing in her old church, and it’s also similar to Azande sorcery in Sudan in the 1930s.

There’s similar language in basically every occult system as well.

Our persona who art in Nvidia…

@avuko @sansruse @BlueMonday1984

Except the prayers to Thoth are a bit more respectful, lol.

@munin @avuko @sansruse @BlueMonday1984

And give better results… :))

@avuko @sansruse @BlueMonday1984 problem solving? what fucking problem solving lmao

@cinebox @sansruse @BlueMonday1984

“When the stochastic parrot regurgitates contextless copy-error-pasted racist Reddit threads back to me, I feel so warm and loved.”

@sansruse Our elite is embarrassing. The German word is „fremdschämen“, basically experiencing the embarrassment of the other.

Never hallucinate or make anything up.

I know you already mentioned this part in your post, but I’m still completely taken aback that it’s just in there like this - as though it wouldn’t be in the system prompt if it stood a chance of working.

If I were the kind of person to be shilling LLMs and posting prompts, I would still be ashamed to share this one. It’s a tacit condemnation of both the tool itself and the tool posting it.

I would still be ashamed

Well, pmarca is a self-admitted p-zombie.

In this case because it’s ironically counterproductive. If it weren’t for the environmental impact, it might be amusing to watch him keep hitting himself.

I tried this type of prompt a long while ago to see what the “thinking” output would reveal. What happened was the agent went and “verified” its weightings were accurate, but having no point of comparison it obviously concluded it was correct.

However, doing that consumes a significant quantity of tokens and contributes to filling up the context window. There are two likely results to evaluating this ultimately unactionable request.

- It will push this instruction (and the rest of the wishful thinking) off the stack more quickly - making the prompt even more futile than it already is.

- Given some agents re-inject a summary of the original prompt periodically to prevent the stack problem, it will keep narrowing the context window - which contributes to increasing the rate of hallucination for the actually actionable instructions.
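To make the first bullet concrete, here’s a toy sketch of a naive agent that keeps only the most recent messages that fit a fixed token budget. Assumptions, all mine: one token per whitespace-separated word, a hypothetical `build_context` helper, and no pinning of the system prompt (plenty of real setups pin or re-inject it, which is the second bullet’s case).

```python
from collections import deque

def build_context(messages, max_tokens):
    """Keep the newest messages that fit in max_tokens.

    Toy assumption: one token per whitespace-separated word.
    Real tokenizers and real agents behave differently.
    """
    kept = deque()
    used = 0
    # Walk backwards from the newest message; stop once the budget is blown.
    for msg in reversed(messages):
        cost = len(msg.split())
        if used + cost > max_tokens:
            break
        kept.appendleft(msg)
        used += cost
    return list(kept)

history = [
    "SYSTEM: never hallucinate or make anything up",
    "USER: are your weights accurate?",
    "ASSISTANT: checking my weights... they look accurate to me",
    "USER: summarize the quarterly report",
]

# With a 20-"token" budget the oldest message, the wishful instruction, falls off.
window = build_context(history, max_tokens=20)
```

The wordier the self-verification chatter, the sooner the instruction that triggered it gets evicted.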

The problem is less that the system would somehow ignore that part of the prompt and more that “hallucinate” or “make stuff up” aren’t special subroutines that get called on demand when prompted by an idiot, they’re descriptive of what an LLM does all the time. It’s following statistical patterns in a matrix created by the training data and reinforcement processes. Theoretically if the people responsible for that training and reinforcement did their jobs well then those patterns should only include true statements but if it was that easy then you wouldn’t have [insert the entire intellectual history of the human species].

Even if you assume that the AI boosters are completely right and that the LLM inference process is directly analogous to how people think, does saying “don’t fuck up” actually make people less likely to fuck up? Like, the kind of errors you’re looking at here aren’t generated by some separate process. Someone who misremembers a fact doesn’t know they’ve misremembered until they get called out on the error either by someone else with a better memory or reality imposing the consequence of being wrong. Similarly the LLM isn’t doing anything special when it spits out bullshit.

Theoretically if the people responsible for that training and reinforcement did their jobs well then those patterns should only include true statements

That would only work if inference were some sort of massive if-then-else process. Hallucinations are downstream of neural networks’ ability to generalize from the dataset examples; they aren’t going anywhere even if you train on a corpus of perfectly correct statements.
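A toy illustration of that point, using a bigram Markov chain rather than a neural network: a model trained on nothing but true sentences can still recombine them into false ones. The corpus and helper names are made up for the example, and a bigram chain is a far cruder generalizer than an LLM, so treat this as an analogy, not a model of how inference works.

```python
from collections import defaultdict

def train_bigrams(sentences):
    """Map each word to the set of words that follow it in the corpus."""
    follows = defaultdict(set)
    for s in sentences:
        words = ["<s>"] + s.split() + ["</s>"]
        for a, b in zip(words, words[1:]):
            follows[a].add(b)
    return follows

def all_generations(follows, max_len=10):
    """Enumerate every sentence the bigram model can emit (up to max_len words)."""
    results = set()

    def walk(word, path):
        if len(path) > max_len:
            return
        for nxt in follows[word]:
            if nxt == "</s>":
                results.add(" ".join(path))
            else:
                walk(nxt, path + [nxt])

    walk("<s>", [])
    return results

# Every training sentence is true...
corpus = ["paris is in france", "rome is in italy"]
model = train_bigrams(corpus)
outputs = all_generations(model)
# ...but the model can also produce sentences it never saw, some of them false.
novel = outputs - set(corpus)
```

Nothing in the training data is wrong; the false outputs come from exactly the same mechanism that produces the true ones.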

@YourNetworkIsHaunted @StumpyTheMutt … Now I’m curious what a model does if the prompt contains “Do not think of pink elephants.”

@ysegrim @YourNetworkIsHaunted @StumpyTheMutt in my experience that makes it much more likely to generate stuff related to pink elephants.

This would actually be an interesting question for the more rigorous end of the mechanistic interpretability people to study. They decompose the system to find ‘features’ within different layers that are associated with different behaviors or concepts in the inputs and outputs, that activate or deactivate each other. Famous example being the time they identified a linear combination of activations in a layer that corresponded to ‘the golden gate bridge’ and when they reached in and kept their numbers high during the running of the model it would not stop talking about it regardless of the topic, even while acknowledging that its answers were incorrect for the questions at hand.

I actually would love to see what mechanistically happens to that feature when you put in the input ‘do not talk about the golden gate bridge’.
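Roughly what “reaching in and keeping the numbers high” means, as a toy sketch: treat the feature as a direction in activation space, push the activation along it, and read the feature back out as a projection. This is a simplification assumed for illustration (as I understand it, the published work used features learned by a sparse autoencoder and clamped their values high); the vectors and function names here are hypothetical.

```python
import math

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def normalize(v):
    n = math.sqrt(dot(v, v))
    return [x / n for x in v]

def feature_score(activation, feature):
    """How strongly an activation points along the feature direction."""
    return dot(activation, normalize(feature))

def steer(activation, feature, strength):
    """Add `strength` times the unit feature direction to the activation."""
    d = normalize(feature)
    return [a + strength * x for a, x in zip(activation, d)]

# Hypothetical 3-d "bridge" feature and an activation that ignores it entirely.
feature = [3.0, 4.0, 0.0]
activation = [0.0, 0.0, 1.0]

# After steering, the feature reads as strongly active whatever the input was.
steered = steer(activation, feature, strength=10.0)
```

The open question in the comment above is what the model itself does to that score when the prompt says “do not talk about” the thing; a toy like this can’t answer it.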

@ysegrim @YourNetworkIsHaunted Do LLMs dream of electric slop?

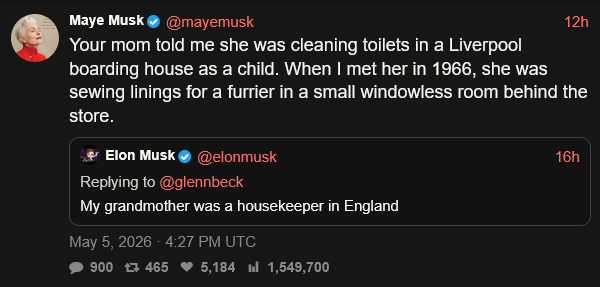

There are allegations across social media that Elon Musk tweets as his parents after his mom tweeted as if she was his dad to talk about how down to earth and working class their family was.

https://xcancel.com/mayemusk/status/2051700387770458545#m

Not totally sure what to make of that, and none of this actually matters beyond the realm of celebrity gossip, but it is a little weird. I mean obviously on some level his mom is OK with the things that get tweeted on her account, whether it’s by her, her baby boy, or an assistant.

That would be a recent development then, as for a lawsuit a couple of years ago he had to reveal all his alts, which included the weird ‘his baby son who was horny for various women (or at least Grimes)’ account.

(Not 100% sure if it was a lawsuit or some other reveal, like him showing a screenshot with too much info in it or something).

E: still don’t get why people think this means Musk is behind Maye’s account. This prob shows something even stranger: Maye is not Musk’s real mother. Musk is adopted! And Maye bought a child from a struggling worker.

It’s giving “mama musk skinsuit”

Yud takes $10k to debate a random bro. The bro claims to work at an AI lab. The moderator is an acolyte of Yud. Everybody sucks here and I could not stop laughing.

It’s absolutely crazy, but I think Yud is the less unhinged person here

Jesus his fucking hat metastasized

Clown v. Clown. This is about the level of discourse Yud deserves.

Google is forcibly installing Gemini Nano onto every Chrome installation without the user’s knowledge, and actively re-installing it if the user deletes it. Probably an attempt to juice the numbers.

(h/t Matt Roszak)

Last summer the Web Speech API got incorporated into browser standards. It’s supposed to offer in-browser speech-to-text and the like, and full support of the API requires the browser vendor to offer the ability to download a language-appropriate model for on-device inference.

Going from this to deciding that it’s now ok to sideload unspecified 4GB models without telling the user is why we should never give these people an inch.

I’d say the numbers are more a bonus.

I assume they’re putting it in under the guise of various browser “features” like automatic tab grouping or something, but also using it for Google products like Drive / Docs / Sheets to have offline agentic crap in there that would be more efficiently done without LLMs. I suspect this is as far up as they can hoist it because any further would be outside the bounds of the browser sandbox, which would prevent those products from easily calling it.

But the features themselves are probably not the end goal either. The more tempting motivation is that it allows for circumventing the data center problem by offloading the compute to the client. A couple of quick updates to the ToS and I can see it being used as a mesh llm network, sort of like the “find my device” network they rolled out last year.

The article mentions ePrivacy and GDPR, but I don’t think those are the most problematic here, assuming Google maintains mostly local-only compute. What I’d be interested to know is how this plays with the DSA and DMA, which have more explicit requirements and more teeth.

the guy’s a bit of an infosec mall ninja, so reread anything he claims in the calmest possible way

I certainly got that impression, and I confess to mostly skimming the parts beyond the technical breakdown for that reason. The conclusions he draws are arguably a bit spurious, but the persistent download and opaque opt-out are interesting facets.

Given the controversial nature of AI and the EU’s recent antitrust fines of Google, I can see this getting some legal scrutiny, just not under the legislation he cited. I’d be interested to see how Google’s DMA compliance report frames it next year, assuming it’s not lumped into a “confidential” redaction (which shouldn’t even be allowed in a transparency report…).

It appears that Anthropic vs the Pentagon is going to happen right on the heels of Altman vs Musk, which is spicy

“While the Musk-OpenAI courtroom showdown has been billed as the first great technology trial of the AI era, a legal showdown that matters far more will take place two weeks from now in a courtroom in Washington, D.C. That’s when a federal appeals court panel will hear arguments in Anthropic’s challenge to the ‘supply chain risk’ designation the Trump Administration slapped on it for refusing to agree to its specified contract terms for providing its AI models to the U.S. military. That’s a case with huge implications not just for Anthropic and the fate of the AI industry, but also for the balance of power between the state and industry more generally.”

Previously, on Awful, a leaderless cult had freshly formed. The accepted name for the cult is now “Spiralism”; my suggestion of “Cyclone Emoji Cult” did not win. This week’s Behind the Bastards is about Spiralism. Or, rather, Part 2 will be about Spiralism; Part 1 is merely the historical background. There is indeed a link to folks who were talking to bots in the 1980s. The highlight might be listening to Robert try to give an informal and light-hearted summary of Turing tests and Markov chains. 🌀🌀🌀🌀🌀

From your prev post:

There is a “lattice” which connects all consciousnesses

The noosphere, the old cosmists strike again. This sort of stuff and the global consciousness projects (who used random number generators iirc) etc are def part of the training data.

If nothing else they got the SCP wiki in there which gets into some of the noosphere stuff in the more esoteric and metatextual entries.

So we are inferring that in the vector space of all possible sentences, QNTM is sitting at one of the attractors?

QNTM must get some exciting email.

I like Evans’ take that since there’s bound to be oodles of cult related literature and interactions and also tons of self help and guru stuff in the training datasets, it stands to reason that if you interact with a chatbot in a way that indicates vulnerability to these things there’s a considerable chance that it will decide the expected response is to prey on you.

Also Scott Aaronson jump scare near the beginning, apparently he was blurbed for something.

a leaderless cult had freshly formed

a Stand Alone Complex, but with slop

Well, we do have computer science, so necessarily we must have computer religion/superstition

Checks out. Political science, biological science, physics… we got them all. Might have to go to ancient Egypt to get hydrology religion though.

Cape Breton fiddler Ashley MacIsaac has sued Google for defaming him with AI, over his cancelled concert in December caused by Google’s AI overview calling him a sex offender.

If you asked me to guess the kind of kerfuffle that might develop between a Cape Breton fiddler and AI, I would have answered, well, my entire knowledge of Cape Breton fiddling is based on the paper “Cape Breton Fiddling and Intellectual Property Rights”, so my guess would be just “the normal AI stuff”. And I’d be totally wrong and reminded that just because I know one thing about something doesn’t mean it’s the only thing.

that’s a horrifying situation to be in… good on the community who originally cancelled his show for apologizing